New Yorkers marched in the streets last summer demanding an end to biased law enforcement practices. Our government responded by passing new laws to root out systemic racism and unjust policing practices.

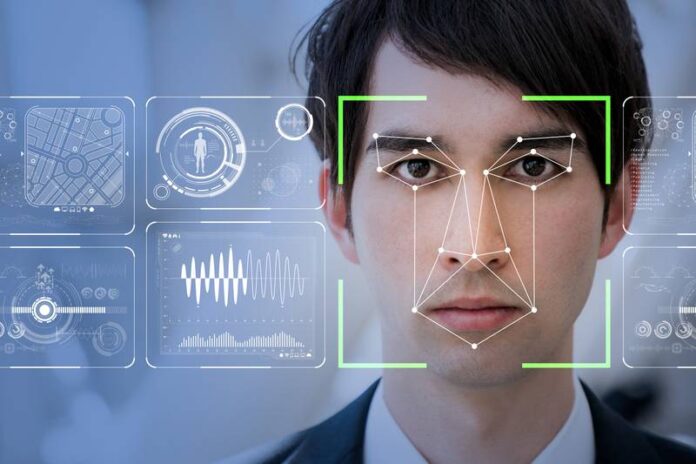

A debate is raging in New York right now about one of these unjust practices: facial recognition. Studies show this technology is inaccurate and error-prone, especially when dealing with Black, Latinx or gender nonconforming people. It exacerbates bias in law enforcement — and we must prevent its use in New York.

Let’s be clear: facial recognition errors are not some abstract harm. A facial recognition error could lead to a New Yorker being wrongfully stopped on the side of the road, taken from his or her family, put in a cage, and charged with a crime that he or she did not commit. Facial recognition means facing the risk of a police stop at the very moment that we know just how dangerous a police encounter can be. A facial recognition error could quickly escalate into not just a pair of handcuffs, but even a knee to the neck.

We ought to celebrate Minneapolis for protecting civil rights. When Minneapolis became the 14th city to outlaw facial recognition, it was particularly poignant. In the city where George Floyd was killed, biased police technology that put residents at risk was outlawed. New York needs to do the same.

The NYPD has proven that it can’t be trusted to use this sort of invasive spy tool. Even when used properly, facial recognition has real risks, but the NYPD has not used its system properly, not even close. In the past, this has meant officers ran facial recognition searches on everything from celebrity look-alikes to hand-drawn sketches. Can’t find a facial recognition match for someone who looks like the subject? That’s fine: just find an image of an actor who looks similar, as the NYPD has done.